AI for Open Surgery: Tool Understanding and Surgical Workflow Analysis

PI-Technion: Assist. Prof. Shlomi Laufer; PI-Rambam: Prof. Gil Bolotin, MD, and Dr. Tom Friedman, MD

Objectives and Clinical Need

Open surgery remains a cornerstone of clinical practice, yet it is still underrepresented in current surgical AI research. In contrast to minimally invasive procedures, open surgery takes place in highly unconstrained environments, where camera viewpoints, lighting conditions, and occlusions vary significantly. These factors pose substantial challenges for standard computer vision methods and limit the development of reliable automated solutions.

At the same time, there is a clear clinical need for systems capable of automatically analyzing surgical procedures. Such systems could support objective assessment of surgical skill, enable detailed workflow analysis, and ultimately assist surgeons during operations. However, progress in this area is constrained by the scarcity of annotated data and the inherent complexity of real surgical scenes.

Our joint work between the Technion and Rambam Health Care Campus addresses these challenges by developing markerless, data-driven methods for understanding open surgery. The core idea is to extract meaningful structure directly from surgical videos, capturing both the behavior of surgical tools and the actions performed by the surgeon.

Database

To support this research, we are collecting a unique dataset of real-world open surgery procedures at Rambam Health Care Campus. The current dataset focuses on saphenous vein harvesting performed during open-heart surgery and includes recordings from multiple surgeons in real operating room conditions.

These videos capture the full complexity of open surgery and reflect the challenges encountered in real clinical environments, rather than controlled laboratory settings.

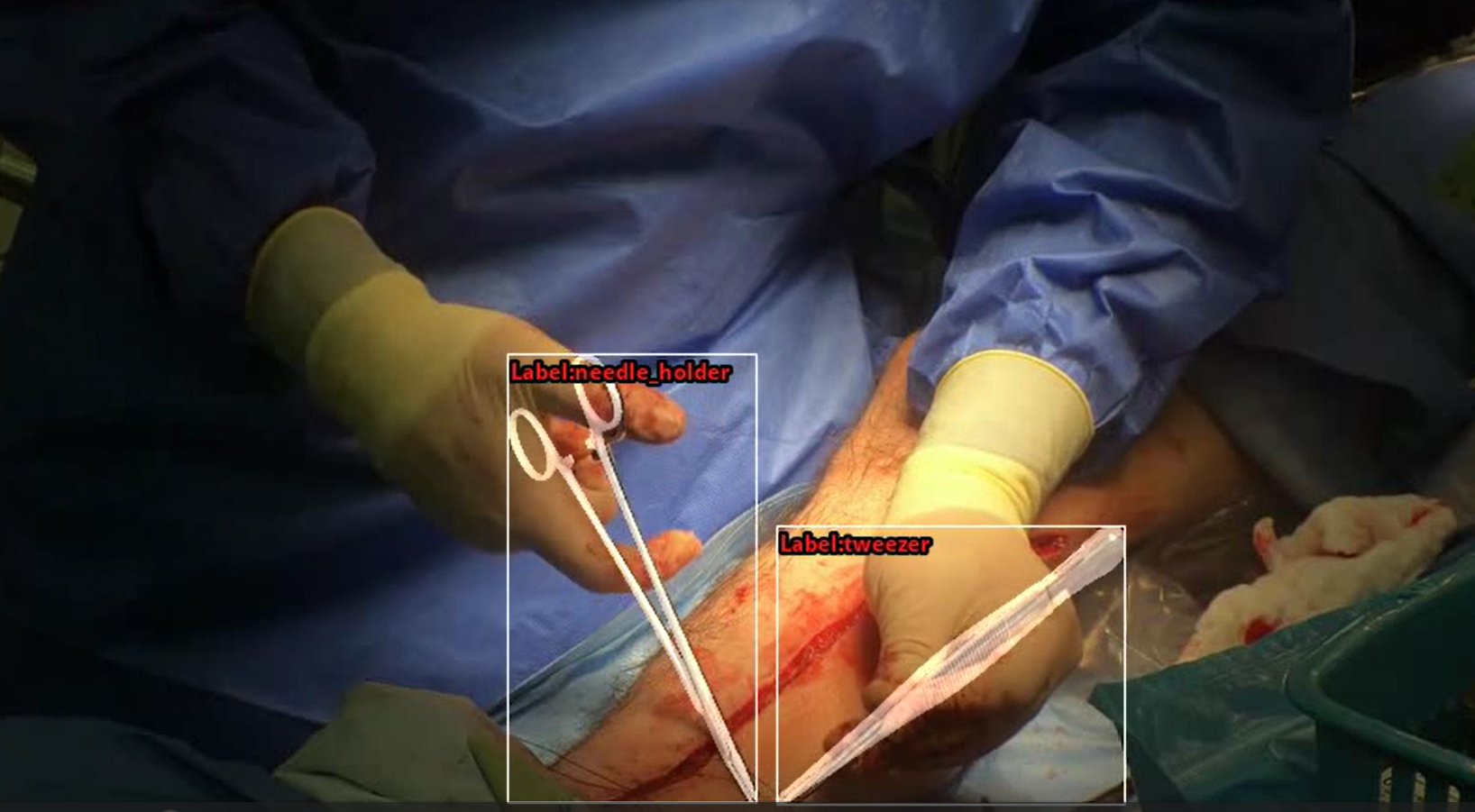

The dataset is annotated at multiple levels. We provide frame-level labels of surgical gestures, enabling fine-grained analysis of actions such as needle insertion, gripping, and passing. In addition, we generate detailed segmentation masks of surgical tools and hands, which are used for both evaluation and model development. Beyond these annotations, our pipeline derives richer representations from the data, including 6D tool pose and 3D hand pose, allowing us to move beyond raw video toward a structured understanding of surgical activity.
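Gesture labels are stored per frame, while much downstream analysis reasons about contiguous actions. The minimal sketch below collapses frame-level labels into gesture segments. The `GestureSegment` schema, the function name, and the label strings (`"idle"`, `"grip"`, `"pass"`) are hypothetical illustrations, not the project's actual annotation format.

```python
from dataclasses import dataclass
from itertools import groupby
from typing import List


@dataclass(frozen=True)
class GestureSegment:
    """One contiguous run of frames sharing a gesture label (hypothetical schema)."""
    label: str
    start: int  # first frame index, inclusive
    end: int    # last frame index, inclusive


def frames_to_segments(frame_labels: List[str]) -> List[GestureSegment]:
    """Collapse per-frame gesture labels into contiguous, non-overlapping segments."""
    segments: List[GestureSegment] = []
    start = 0
    # groupby yields consecutive runs of identical labels, in order
    for label, run in groupby(frame_labels):
        length = sum(1 for _ in run)
        segments.append(GestureSegment(label, start, start + length - 1))
        start += length
    return segments


labels = ["idle", "idle", "grip", "grip", "grip", "pass"]
print(frames_to_segments(labels))
```

Keeping inclusive frame indices makes each segment map directly back onto the original frame-level annotation.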

Research Results

Monocular 6D Pose Estimation of Surgical Tools

We developed a novel framework for monocular 6D pose estimation of articulated surgical instruments in open surgery [1]. The goal is to recover, from a single RGB video stream, the full spatial configuration of surgical tools, including their position, orientation, and articulation.
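To make "position, orientation, and articulation" concrete: a hinged instrument such as a needle holder can be modeled as a rigid base part with a 6D pose (a rotation R and translation t in camera coordinates) plus one hinge angle for the moving jaw. The sketch below applies this forward kinematics to model points. The single revolute joint about the hinge's local z-axis, the function names, and the argument layout are assumptions for illustration, not the parameterization used in [1].

```python
import numpy as np


def rotation_z(theta: float) -> np.ndarray:
    """3x3 rotation about the z-axis by theta radians."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])


def make_transform(R: np.ndarray, t) -> np.ndarray:
    """Pack a rotation R (3x3) and translation t (3,) into a 4x4 homogeneous transform."""
    T = np.eye(4)
    T[:3, :3] = R
    T[:3, 3] = t
    return T


def transform_points(T: np.ndarray, pts: np.ndarray) -> np.ndarray:
    """Apply a 4x4 transform to an (N, 3) array of points."""
    homo = np.hstack([pts, np.ones((len(pts), 1))])
    return (homo @ T.T)[:, :3]


def articulated_tool_points(T_cam_base, hinge_angle, hinge_offset, base_pts, jaw_pts):
    """
    Place both parts of a hypothetical two-part hinged tool in camera coordinates.

    T_cam_base:   4x4 pose of the rigid base part (the 6D pose)
    hinge_angle:  articulation in radians, assumed to rotate the jaw about the hinge z-axis
    hinge_offset: (3,) hinge position expressed in the base part's frame
    base_pts:     (N, 3) model points of the base part, in the base frame
    jaw_pts:      (M, 3) model points of the jaw, in a frame whose origin is the hinge
    """
    T_base_jaw = make_transform(rotation_z(hinge_angle), hinge_offset)
    T_cam_jaw = T_cam_base @ T_base_jaw  # chain: camera <- base <- jaw
    return transform_points(T_cam_base, base_pts), transform_points(T_cam_jaw, jaw_pts)


pose = make_transform(np.eye(3), [0.0, 0.0, 5.0])  # base part 5 units in front of the camera
base, jaw = articulated_tool_points(
    pose, np.pi / 2, np.zeros(3), np.array([[1.0, 0.0, 0.0]]), np.array([[1.0, 0.0, 0.0]])
)
print(base, jaw)
```

Chaining transforms this way means a single estimate (R, t, hinge angle) determines where every point of both parts appears.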

A key challenge in this problem is the lack of annotated real-world data. To address this, we developed a synthetic data generation pipeline based on 3D scanning of surgical instruments and physically based rendering. This approach enables the creation of large-scale, fully annotated datasets that capture realistic variations in tool appearance, articulation, and interaction with the surgeon’s hands.
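Synthetic rendering can yield annotations automatically because the pose of every tool is known when a frame is generated. As an illustration, the sketch below projects 3D model points through an assumed pinhole camera with intrinsics K to obtain 2D keypoints and a bounding box. The intrinsic values and function names are hypothetical, and the exact label types produced by the project's pipeline are not specified here.

```python
import numpy as np


def project_points(K: np.ndarray, T_cam_obj: np.ndarray, pts_obj: np.ndarray) -> np.ndarray:
    """Project (N, 3) object-frame points to (N, 2) pixel coordinates with a pinhole model."""
    homo = np.hstack([pts_obj, np.ones((len(pts_obj), 1))])
    pts_cam = (homo @ T_cam_obj.T)[:, :3]  # object frame -> camera frame
    if np.any(pts_cam[:, 2] <= 0):
        raise ValueError("all points must lie in front of the camera (z > 0)")
    uvw = pts_cam @ K.T                    # apply intrinsics
    return uvw[:, :2] / uvw[:, 2:3]        # perspective division


def bbox_from_keypoints(uv: np.ndarray):
    """Axis-aligned 2D box (x_min, y_min, x_max, y_max) enclosing the projected points."""
    (x_min, y_min), (x_max, y_max) = uv.min(axis=0), uv.max(axis=0)
    return float(x_min), float(y_min), float(x_max), float(y_max)


# Hypothetical 640x480 camera and a tool placed 2 units in front of it.
K = np.array([[500.0, 0.0, 320.0], [0.0, 500.0, 240.0], [0.0, 0.0, 1.0]])
T = np.eye(4)
T[2, 3] = 2.0
uv = project_points(K, T, np.array([[0.0, 0.0, 0.0], [0.1, 0.0, 0.0]]))
print(uv, bbox_from_keypoints(uv))
```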

Building on this data, we designed a dedicated pose estimation framework that jointly predicts tool pose, category, and articulation. The model is further refined using domain adaptation techniques that leverage unlabeled real surgical videos, effectively bridging the gap between synthetic and clinical data. Importantly, this approach eliminates the need for manual annotation of real surgical images and allows new tools to be incorporated using only their 3D models.
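The paragraph above does not detail the adaptation method. One widely used way to exploit unlabeled target-domain frames is mean-teacher self-training: a teacher network, updated as an exponential moving average (EMA) of the student's weights, labels real frames, and only confident predictions are kept as training targets for the student. The sketch below shows just those two steps, with NumPy arrays standing in for network outputs and weights. The threshold, momentum, and function names are assumptions, and this is not claimed to be the technique used in [1].

```python
import numpy as np


def select_pseudo_labels(teacher_probs: np.ndarray, threshold: float = 0.9):
    """
    Keep only confident teacher predictions on unlabeled real frames.

    teacher_probs: (N, C) class probabilities (e.g. tool category) from the teacher.
    Returns the indices of the kept frames and their hard pseudo-labels.
    """
    confidence = teacher_probs.max(axis=1)
    keep = np.flatnonzero(confidence >= threshold)
    return keep, teacher_probs[keep].argmax(axis=1)


def ema_update(teacher: dict, student: dict, momentum: float = 0.99) -> dict:
    """Teacher weights track a slow exponential moving average of the student's weights."""
    return {name: momentum * teacher[name] + (1.0 - momentum) * student[name] for name in teacher}


probs = np.array([[0.95, 0.05], [0.55, 0.45], [0.08, 0.92]])
idx, pseudo = select_pseudo_labels(probs)
print(idx, pseudo)
```

The slow EMA keeps the teacher's pseudo-labels stable while the student adapts, which is the usual motivation for this design.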

The resulting system demonstrates strong performance on real surgical procedures, despite challenges such as occlusions, reflective surfaces, and complex tool geometries. This makes it a practical foundation for downstream applications, including surgical navigation, augmented reality, and robotic assistance.
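Pose-estimation accuracy is commonly reported as the rotation and translation error between predicted and ground-truth poses. The sketch below computes the geodesic rotation error and the Euclidean translation error. These are standard definitions offered for illustration; the metrics actually reported in [1] may differ.

```python
import numpy as np


def rotation_error_deg(R_pred: np.ndarray, R_gt: np.ndarray) -> float:
    """Geodesic angle between two 3x3 rotation matrices, in degrees."""
    # trace(R_pred^T R_gt) = 1 + 2 cos(angle) for the relative rotation
    cos_angle = (np.trace(R_pred.T @ R_gt) - 1.0) / 2.0
    # clip guards against values slightly outside [-1, 1] from floating-point error
    return float(np.degrees(np.arccos(np.clip(cos_angle, -1.0, 1.0))))


def translation_error(t_pred, t_gt) -> float:
    """Euclidean distance between predicted and ground-truth translations."""
    return float(np.linalg.norm(np.asarray(t_pred, dtype=float) - np.asarray(t_gt, dtype=float)))


theta = np.radians(30.0)
R = np.array([[np.cos(theta), -np.sin(theta), 0.0],
              [np.sin(theta), np.cos(theta), 0.0],
              [0.0, 0.0, 1.0]])
print(rotation_error_deg(R, np.eye(3)), translation_error([0, 0, 0], [3, 4, 0]))
```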

Multimodal Surgical Gesture Recognition

Building on the extracted pose information, we further investigate the problem of surgical gesture recognition in open surgery, where the goal is to identify fine-grained actions performed by the surgeon over time [2].

Rather than relying solely on video data, we adopt a multimodal approach that combines visual information with both tool pose and 3D hand pose. These additional modalities provide a more structured and robust representation of the surgical scene, helping to overcome the variability inherent in operating room recordings.
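The multimodal input can be pictured as one feature vector per frame. The sketch below builds such vectors by early fusion (per-frame concatenation after per-dimension standardization), with an option to drop the video stream for a pose-only variant. The fusion strategy, the 21-joint hand layout, the 14-value tool-pose encoding, and all names are assumptions for illustration; the architecture used in [2] is not described here.

```python
import numpy as np


def standardize(x: np.ndarray, eps: float = 1e-8) -> np.ndarray:
    """Z-score each feature dimension over time; constant dimensions map to 0."""
    return (x - x.mean(axis=0)) / (x.std(axis=0) + eps)


def fuse_frame_features(video_feats, tool_pose, hand_joints, use_video=True):
    """
    Early fusion by per-frame concatenation (an assumed design, not the published model).

    video_feats: (T, D) per-frame visual embeddings, ignored if use_video is False
    tool_pose:   (T, P) flattened per-frame tool pose and articulation values
    hand_joints: (T, J, 3) per-frame 3D hand joint positions
    """
    T = tool_pose.shape[0]
    if hand_joints.shape[0] != T or (use_video and video_feats.shape[0] != T):
        raise ValueError("all modalities must have the same number of frames")
    # Express joints relative to joint 0 (assumed to be the wrist) to remove global hand position.
    hand_rel = hand_joints - hand_joints[:, :1, :]
    parts = [standardize(tool_pose), standardize(hand_rel.reshape(T, -1))]
    if use_video:
        parts.insert(0, standardize(video_feats))
    return np.concatenate(parts, axis=1)


rng = np.random.default_rng(0)
video = rng.normal(size=(10, 512))    # hypothetical 512-d visual embedding per frame
tools = rng.normal(size=(10, 14))     # hypothetical: 2 tools x 7 pose/articulation values
hands = rng.normal(size=(10, 21, 3))  # hypothetical 21-joint hand skeleton
print(fuse_frame_features(video, tools, hands).shape)
print(fuse_frame_features(None, tools, hands, use_video=False).shape)
```

The pose-only variant (`use_video=False`) corresponds to the kind of configuration that does not require retaining raw video frames.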

Our results show that integrating these modalities leads to a significant improvement in recognition performance compared to video-only methods. Moreover, we find that models based solely on pose information can achieve performance comparable to video-based approaches, highlighting a promising direction for privacy-preserving surgical analysis.
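Frame-level gesture recognition is commonly evaluated with frame-wise accuracy, which rewards per-frame correctness, and a segmental edit score, which penalizes over-segmentation by comparing the ordered sequences of predicted and true segments. The paragraph above does not state which metrics underlie the comparison; the sketch below is a standard pure-Python formulation for illustration, with hypothetical gesture labels.

```python
from itertools import groupby
from typing import Hashable, List, Sequence


def frame_accuracy(pred: Sequence[Hashable], gt: Sequence[Hashable]) -> float:
    """Percentage of frames whose predicted gesture matches the ground truth."""
    if len(pred) != len(gt) or not gt:
        raise ValueError("sequences must be non-empty and of equal length")
    return 100.0 * sum(p == g for p, g in zip(pred, gt)) / len(gt)


def segment_labels(frames: Sequence[Hashable]) -> List[Hashable]:
    """Ordered labels of contiguous segments, e.g. a,a,b,b,a -> a,b,a."""
    return [label for label, _ in groupby(frames)]


def levenshtein(a: Sequence[Hashable], b: Sequence[Hashable]) -> int:
    """Minimum number of insertions, deletions, and substitutions turning a into b."""
    prev = list(range(len(b) + 1))
    for i, x in enumerate(a, start=1):
        curr = [i]
        for j, y in enumerate(b, start=1):
            curr.append(min(prev[j] + 1,              # delete x
                            curr[j - 1] + 1,          # insert y
                            prev[j - 1] + (x != y)))  # substitute (free if equal)
        prev = curr
    return prev[-1]


def edit_score(pred: Sequence[Hashable], gt: Sequence[Hashable]) -> float:
    """Segmental edit score in [0, 100]; 100 means identical segment order."""
    p, g = segment_labels(pred), segment_labels(gt)
    if not p and not g:
        return 100.0
    return 100.0 * (1.0 - levenshtein(p, g) / max(len(p), len(g)))


gt = ["grip", "grip", "pass", "pass", "idle"]
pred = ["grip", "grip", "idle", "pass", "idle"]
print(frame_accuracy(pred, gt), edit_score(pred, gt))
```

Here a single mislabeled frame costs only 20 points of accuracy but introduces a spurious segment, which the edit score penalizes separately.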

Overall Impact

Together, these contributions establish a unified framework for understanding open surgery, spanning from low-level perception of surgical tools to high-level interpretation of surgical actions. By combining synthetic data, domain adaptation, and multimodal learning, our work demonstrates that it is possible to overcome the traditional limitations of surgical datasets and develop robust AI systems for real clinical environments.

Beyond analysis, accurate estimation of surgical tool pose has important implications for medical robotics and human–robot interaction in the operating room. Reliable knowledge of tool position, orientation, and articulation can enable safer and more precise collaboration between surgeons and robotic systems, support context-aware assistance, and improve the integration of robotic platforms into clinical workflows.

This research therefore opens the door to a range of applications, including automated skill assessment, real-time feedback systems, advanced surgical assistance technologies, and next-generation human–robot collaboration in surgery, ultimately contributing to safer and more effective patient care.

Publications:

[1] Spektor R, Friedman T, Or I, Bolotin G, Laufer S. Monocular pose estimation of articulated open surgery tools-in the wild. Med Image Anal. https://doi.org/10.1016/j.media.2025.103618.

[2] Meiraz O, Laufer S, Spector R, Or I, Bolotin G, Friedman T. Enhancing open-surgery gesture recognition using 3D pose estimation. International Journal of Computer Assisted Radiology and Surgery 2025 2026:1–9. https://doi.org/10.1007/s11548-025-03564-1

[Figure: 3D reconstruction of surgical instruments for synthetic data generation (needle holder, tweezers)]